In early 2026, SaaStr founder Jason Lemkin shared a detailed overview of his organization’s deployment of artificial intelligence agents. The headline figure — $254 per month in direct costs to run two agents that assumed responsibilities previously held by vice-president-level staff — naturally drew significant attention. However, the operational reality behind these numbers offers a more complex and practical lesson for organizations considering similar shifts.

This article examines the SaaStr case objectively, separating the measurable outcomes from the broader requirements of maintaining such systems, and extracts practical lessons for management.

What SaaStr's AI Agent Deployment Actually Teaches Us

The Setup

SaaStr currently manages over 20 AI agents in production with a core team of three people. Two specific agents illustrate the model:

- "10K" (AI VP of Marketing): Handles campaign planning and execution, daily updates on go-to-market strategies, performance tracking, drafting content, and providing budget recommendations.

- "Qbee" (AI VP of Customer Success): Manages relationships with more than 100 sponsors, handles communications, monitors project completion, manages internal workflows, and identifies exceptions that require human intervention.

Both agents were developed internally, using industry-standard software tools and historical data from the previous 4 to 10 years of SaaStr’s operations.

The Cost Structure: Understanding Total Ownership

The combined monthly runtime cost for these agents is $254. By comparison, hiring two senior leaders would typically incur an annual cost of $500,000 to $800,000 in total compensation.

However, a complete financial picture must include the upfront investment. SaaStr reports an annual investment of over $500,000 in AI infrastructure and development. For instance, the "10K" agent required weeks of iterative development and comprises over 14,000 lines of code.

Consequently, the first-year total cost of ownership is roughly equivalent to hiring two senior leaders. The economic advantage becomes clear in the second year and beyond, as development costs are spread out while runtime expenses remain low.

Lesson: The economics of AI agents are cumulative. Comparing monthly runtime costs to annual salaries is a starting point, but a multi-year analysis provides a more accurate view of the investment.
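
The multi-year comparison can be sketched in a few lines of Python. The dollar figures are the ones quoted above. Treating the $500,000 as a one-time first-year build cost, using the low end of the salary range, and leaving out human maintenance time are simplifying assumptions, and the function name is hypothetical.

```python
# Figures quoted in the article; how they are combined here is an assumption.
BUILD_COST = 500_000         # assumed one-time, first-year development spend
MONTHLY_RUNTIME = 254        # combined runtime cost of the two agents
ANNUAL_HUMAN_COST = 500_000  # low end of the $500k-$800k range for two hires


def cumulative_costs(years: int) -> tuple[int, int]:
    """Return (agent_total, human_total) in dollars after `years` years."""
    agent_total = BUILD_COST + MONTHLY_RUNTIME * 12 * years
    human_total = ANNUAL_HUMAN_COST * years
    return agent_total, human_total


for year in range(1, 4):
    agent, human = cumulative_costs(year)
    print(f"Year {year}: agents ${agent:,} vs. hires ${human:,}")
```

Under these assumptions the two options are close to break-even in year one ($503,048 against $500,000), and the gap opens from year two onward. Adding the cost of human maintenance time, or repeating the development spend each year, would narrow that gap.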

Output Quality: When Agents Influence Strategy

A significant result from the deployment occurred when the marketing agent (10K) proposed a change to event pricing. The leadership accepted the recommendation, resulting in a 41% increase in event attendance and a 6% increase in revenue.

This example demonstrates that agents can contribute beyond simple administrative tasks. When provided with sufficient historical data and clear performance targets, agents can offer analytical and strategic recommendations. The challenge for organizations, therefore, is not whether agents are capable of strategic work, but whether they have the necessary decision-making frameworks to review and authorize such recommendations.

The Maintenance Reality: Capacity for Oversight

SaaStr estimates that each production agent requires the equivalent of half of a full-time employee’s attention for ongoing maintenance. This includes refining instructions, updating context, fixing integration errors, and monitoring output quality.

The maintenance requirements vary by function:

- Marketing (10K): Requires daily adjustments. Marketing is dynamic and creative, meaning instructions need to be updated as campaigns change and tone requires regular recalibration.

- Customer Success (Qbee): Requires maintenance a few times per week. Because the work is based on consistent patterns, the focus is primarily on updating knowledge bases and addressing system changes.

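With 20 agents in operation, that estimate implies roughly 10 full-time equivalents of maintenance work, well beyond what SaaStr’s lean team of three humans can supply. The team must therefore prioritize which agents receive the most oversight.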

Lesson: Scaling the number of agents scales the maintenance workload. Organizations that deploy multiple agents without a clear prioritization model risk ending up with a portfolio in which individual agents receive too little supervision.
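
One way to reason about prioritization is to compare estimated maintenance demand with available capacity, then split that capacity by priority. The sketch below is illustrative only: the half-FTE estimate comes from the article, while the function names, the weights, and the proportional allocation rule are assumptions, not SaaStr's method.

```python
def required_fte(n_agents: int, fte_per_agent: float = 0.5) -> float:
    """Estimated maintenance demand, using the article's half-FTE figure."""
    return n_agents * fte_per_agent


def allocate_oversight(team_fte: float, weights: dict[str, float]) -> dict[str, float]:
    """Split available capacity across agents in proportion to priority weights."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: team_fte * w / total for name, w in weights.items()}


demand = required_fte(20)  # 20 agents at 0.5 FTE each
capacity = 3.0             # three humans
print(f"Demand {demand} FTE vs. capacity {capacity} FTE")

# Hypothetical weights: higher means the agent's output needs more attention.
weights = {"10K": 3, "Qbee": 1, "all other agents": 6}
for name, fte in allocate_oversight(capacity, weights).items():
    print(f"{name}: {fte:.2f} FTE")
```

A proportional split is the simplest possible rule. A real model would probably also set a minimum level of attention below which an agent should be paused rather than left unsupervised.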

The Nature of Management: Humans vs. Agents

The SaaStr case highlights a shift in what it means to be a manager. Managing a senior human employee involves significant time spent on tasks that do not directly produce business output: career development, conflict resolution, coordination meetings, and performance reviews.

Conversely, managing an agent consists of refining instructions, assessing quality, and tuning systems. In this context, maintenance and production are effectively the same activity. Every hour spent managing the agent results in an immediate improvement to the output. This changes the traditional "span of control" model, as the management of agents consumes less overhead time and focuses entirely on the quality of work produced.

Automation vs. Autonomy

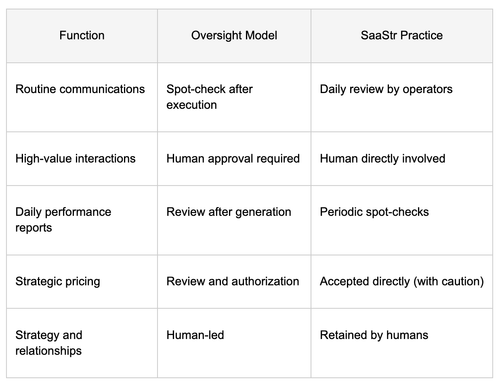

SaaStr reports that Qbee handles 90–95% of repeatable tasks for sponsor management, yet human operators review the output daily. High-value interactions remain strictly under human control.

It is important to distinguish between automation and autonomy. An agent can automate a high volume of tasks while still operating under strict human supervision. The two concepts are independent. The SaaStr model suggests that the primary design question for organizations is not "is this agent autonomous?" but "what type of oversight does this specific function require?"
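
The separation between the two concepts can be made concrete with a small routing sketch. Everything here is hypothetical (the `Task` fields, the `Oversight` modes, and the routing rule); it only illustrates that who executes a task and who checks it are separate decisions.

```python
from dataclasses import dataclass
from enum import Enum


class Oversight(Enum):
    AUDIT = "agent executes, humans review afterwards"
    APPROVAL = "agent drafts, a human approves before release"
    HUMAN_ONLY = "a human handles the task directly"


@dataclass
class Task:
    description: str
    repeatable: bool
    high_value: bool


def route(task: Task) -> Oversight:
    """Assumed rule: high-value work stays with humans; the rest is automated
    under a review mode that depends on how repeatable the work is."""
    if task.high_value:
        return Oversight.HUMAN_ONLY
    if task.repeatable:
        return Oversight.AUDIT
    return Oversight.APPROVAL


tasks = [
    Task("weekly sponsor status email", repeatable=True, high_value=False),
    Task("reply to an unusual sponsor request", repeatable=False, high_value=False),
    Task("renewal negotiation with a top sponsor", repeatable=False, high_value=True),
]
for t in tasks:
    print(f"{t.description}: {route(t).name}")
```

In this sketch the agent touches two of the three tasks, yet none of its output leaves without some form of human check, which is the sense in which automation and autonomy are independent.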

Build vs. Buy

There is a substantial price gap between building agents internally (low monthly runtime costs) and purchasing commercial agent products (often costing $25,000+ per month).

The higher cost of commercial products covers the infrastructure, engineering teams, security compliance, and service guarantees. Internal builds shift those costs to the organization’s own engineering capacity. The decision to build or buy is therefore not just a cost calculation; it is a choice about where to allocate your internal resources. Organizations with strong engineering capabilities may find internal builds effective, while others may find the commercial option more economical when the opportunity cost of internal time is included.

Applying a Structured Framework

The SaaStr experience suggests that three dimensions are reliable predictors of a successful deployment:

- Repetition: How often does the task occur? The most successful agents handle continuous or daily tasks. The economic value of automation increases as the marginal cost per execution approaches zero.

- Standardizability: How predictable is the work? Highly predictable, pattern-based tasks are easier to maintain. Work that is contextual or creative requires more frequent tuning and higher maintenance.

- Oversight requirements: What level of involvement is necessary? Successful deployments design the oversight model (auditing, approval, or direct human control) into the workflow from the start.
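
As a rough illustration, the three dimensions above could be recorded per candidate task and used to rank where to deploy agents first. The scoring rule, field names, and example values below are all hypothetical; the article does not describe a formula.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    runs_per_month: int       # repetition
    standardizability: float  # 0.0 (contextual, creative) to 1.0 (pattern-based)
    oversight: str            # "audit", "approval", or "human", chosen up front


def priority(c: Candidate) -> float:
    """Assumed heuristic: frequent, predictable work ranks first."""
    return c.runs_per_month * c.standardizability


# Illustrative values only, not SaaStr data.
candidates = [
    Candidate("campaign copy drafts", 60, 0.4, "approval"),
    Candidate("sponsor status updates", 600, 0.9, "audit"),
    Candidate("annual pricing review", 1, 0.2, "human"),
]

ranked = sorted(candidates, key=priority, reverse=True)
for c in ranked:
    print(f"{c.name}: score {priority(c):.1f}, oversight = {c.oversight}")
```

Requiring the `oversight` field for every candidate mirrors the third point: the review model is decided before deployment rather than added afterwards.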

Business Outcomes

As of May 2026, SaaStr reports the following outcomes:

- 20+ production AI agents across various functions.

- A team of 3 humans managing the entire agent portfolio.

- A total monthly cost for the AI software stack of approximately $2,300.

- A transition in revenue growth from -19% year-over-year to +47% year-over-year.

- An estimated 70% reduction in human hours dedicated to operational tasks.

These results are specific to SaaStr’s environment—a media and events company with a focused team and significant internal engineering resources. Other organizations should adapt these findings to their own specific context and constraints.

Key Takeaways

- Agent economics are cumulative. First-year costs may be high due to development, but advantages compound in subsequent years.

- Agents can provide strategic value. When well-informed by data, agents generate actionable insights, requiring organizations to have clear review and approval frameworks.

- Maintenance is a constant. Scaling agents increases the human management load. Prioritization is necessary to ensure quality.

- Management shifts to output-focused work. Managing agents is different from managing people, as time spent maintaining the agent directly improves the final product.

- Automation does not imply autonomy. High levels of automation can and should coexist with rigorous human oversight. The design of that oversight is a critical success factor.